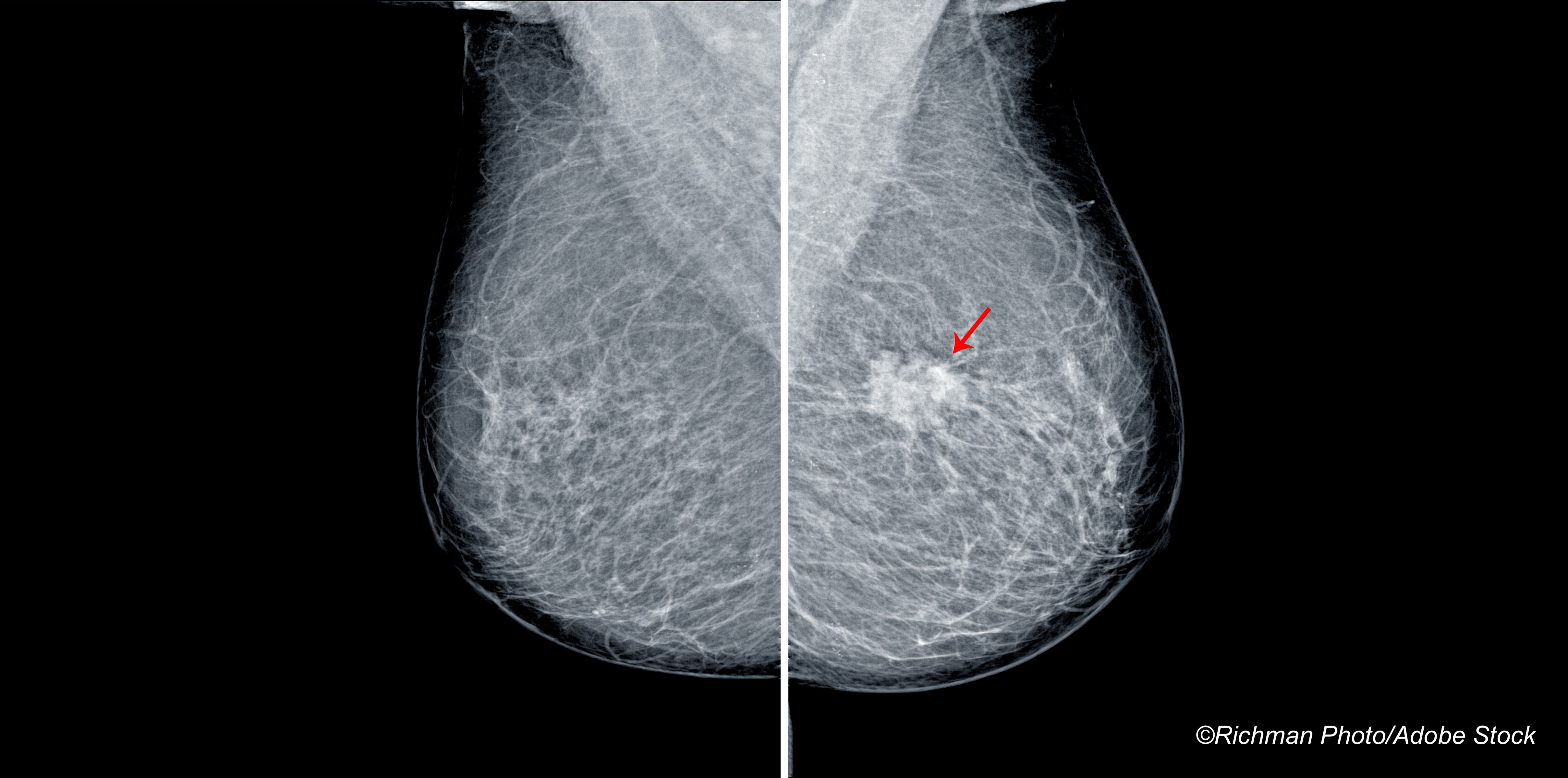

CHICAGO -- A study comparing radiologists' mammogram reviews with reviews by an artificial intelligence (AI) algorithm found little difference in breast cancer detection rates between the two, researchers reported at the annual meeting of the Radiological Society of North America.

Measured against what physicians found when they read scans over a three-year period, the AI program reached about 90% sensitivity and 90% specificity, with a recall rate below 10%, reported Gerald Lip, MD, honorary consultant in radiology at the University of Aberdeen in Scotland.

Lip said that physicians reading 12,120 scans in the 2016-17 period detected 100 positives; for the same period, the artificial intelligence program achieved a sensitivity of 91%, a specificity of 91.11%, and a recall rate of 9.56%.

For the next period, 2017-19, the radiologists handled 27,824 screening cases, which yielded 229 positives; the artificial intelligence algorithm achieved a sensitivity of 88.21%, a specificity of 90.92%, and a recall rate of 9.73% for this group of scans, Lip reported.
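For readers who want to see how the three figures relate to one another, the following is a minimal Python sketch, not the researchers' method. It assumes the standard definitions of sensitivity and specificity, and it assumes that recall rate means the share of all screened cases the AI flagged for further assessment. The article does not report the underlying case counts: the counts below are back-calculated so that they reproduce the reported percentages, and the function name `screening_metrics` is hypothetical.

```python
def screening_metrics(tp, fn, tn, fp):
    """Return sensitivity, specificity and recall rate as fractions.

    tp/fn: cancers flagged / missed by the reader.
    tn/fp: non-cancers cleared / flagged by the reader.
    Recall rate is ASSUMED here to be (tp + fp) / all screened cases.
    """
    total = tp + fn + tn + fp
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "recall_rate": (tp + fp) / total,
    }


# ASSUMED counts, back-calculated from the reported totals and percentages.
# They are illustrative only and were not reported in the article.
periods = {
    "2016-17": dict(tp=91, fn=9, tn=10952, fp=1068),     # 12,120 scans, 100 positives
    "2017-19": dict(tp=202, fn=27, tn=25089, fp=2506),   # 27,824 scans, 229 positives
}

results = {name: screening_metrics(**counts) for name, counts in periods.items()}

for name, m in results.items():
    print(
        f"{name}: sensitivity {m['sensitivity']:.2%}, "
        f"specificity {m['specificity']:.2%}, "
        f"recall rate {m['recall_rate']:.2%}"
    )
```

Under these assumptions, the reported recall rates in both periods are consistent with counting every AI-flagged case, true or false positive, as a recall.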

“The performance of this artificial intelligence tool shows a high degree of sensitivity, specificity, and an acceptable recall rate,” he said. “The findings demonstrate how an artificial intelligence tool would perform as a stand-alone reader and its potential to contribute to the double reading workflow.”

The researchers attempted to demonstrate how the AI tool would work in a real-world environment. To do that, Lip and colleagues scrutinized two sets of mammographic images stored within a trusted research environment at a university. The dataset comprised three years of consecutively acquired national breast cancer screening activity at a mid-sized center, Lip explained.

Data linkage was performed with a paperless breast screening reporting system which allowed for complete anonymization of data to facilitate external analysis.

The AI tool analysis followed a standard machine-learning format with a validation set of 12,120 cases, and a final test set of 27,824 cases. “No model alterations or re-training were conducted during or prior to the artificial intelligence evaluation,” Lip reported.

The researchers defined positive cases as pathologically-confirmed, screen-detected breast cancers, not including cancers detected between screening rounds—so-called interval cases.

Lip said the study affirms the successful use of a novel trusted research environment “to conduct robust evaluation of an external artificial intelligence tool on previously unseen, unenriched, sequential local data, whilst satisfying all governance and security requirement… Use of a trusted research environment enabled close collaboration between academic, industry and clinical teams to enable a large-scale, real-world evaluation of an artificial intelligence tool.”

“I have been reading scans for 40 years, and I think I am pretty good at it, but if you tell me there is an algorithm that can make me more accurate, I am all for it,” said Elliot Fishman, MD, professor of radiology, of oncology, and of surgery at Johns Hopkins University, Baltimore.

“At the end of the day, the radiologist is still responsible for making the final decision,” he told BreakingMED. “The artificial intelligence program may over-call some things, but if you are the radiologist, you just have to learn how to use it.”

Fishman said that there has been discussion about people not going into radiology for fear that artificial intelligence will force them out. “I don’t think we have to force this. We just have to learn how to use it to benefit our patients,” he said.

He suggested that artificial intelligence will change radiology, just as MRI did, and said he welcomed studies like the one from Scotland that push the envelope.

John McKenna, Associate Editor, BreakingMED™

Lip disclosed no relevant relationships with industry. His work is funded via UK grant money from Innovate UK in a program called iCAIRD.

Fishman disclosed relationships with Siemens and GE.
